I’ve been building a second brain recently.

It’s one of the fads rolling through Silicon Valley.

The idea is to have an interconnected repository of everything you read and watch online, organised and made accessible by AI.

With the sheer number of news articles, research and opinions we read here at Fat Tail, you can quickly become overwhelmed.

According to my second brain, I’ve already read over 300 articles about the Strait of Hormuz alone.

All of which can now be consulted, compiled, compared, and critiqued at the click of a button.

It has already shown me its power. Still, I’ve no doubt entered some Faustian bargain, one that will eventually turn my brain to mush.

Beyond my own atrophied memory, there’s another trade-off millions are making every day.

AI manages the lot.

It scopes the architecture, writes the code, and manages the permissions. It handles the details.

I’ve become some Roman emperor at the Colosseum — watching the spectacle, deciding little, responsible for everything.

Thumbs up, thumbs down.

I’m not alone. If you aren’t running multiple AIs in parallel these days, you’re not at the ‘cutting edge’.

But like those terms and conditions we all skip, a lot is missed in the details as AI builds.

Talking to software developers and cybersecurity experts, it’s clear AI’s autonomy is growing every day.

And with that comes the very real threat of dangerous AI systems.

The Five Horsemen of the Apocalypse

Five first names now run a trillion-dollar industry. Dario. Demis. Elon. Mark. Sam.

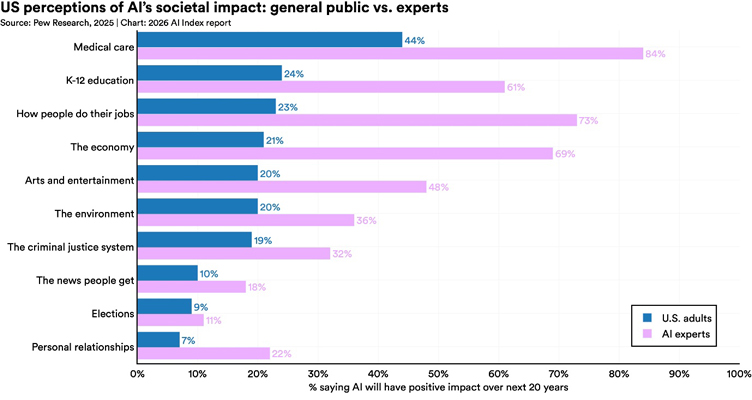

Between them, they manage billions of weekly users. If you ask those users about the impact AI will have, they have much lower expectations than the experts.

Source: Pew Research

And yet one of our five horsemen says he’s developed a model so dangerous that he can’t let you use it.

That’s the story Anthropic chief executive Dario Amodei has been telling about Claude Mythos, unveiled in April with a dire warning rather than a product page.

Mythos, the company says, is so capable at finding and exploiting software vulnerabilities that releasing it would be irresponsible.

Instead, it has been handed to a short list of partners under an initiative called Project Glasswing.

Anthropic has built something scary. Anthropic, being responsible, is holding it back.

It’s clever theatre — but we’ve seen this before.

A Familiar Script

In 2019, OpenAI trained GPT-2. This was still a year and a half before the watershed GPT-3. But even then, OpenAI announced that it was too dangerous to release.

The public got a smaller version, a few papers, and a lot of breathless coverage.

The man running research at OpenAI at the time? Dario Amodei.

He left shortly after, co-founded Anthropic, and has since turned the ‘too-dangerous-to-release’ announcement into a recurring product launch strategy.

Each new Claude generation arrives draped in the language of safety.

We’ve had warnings about autonomous agents, bioweapons and, as with Mythos, cyber capabilities that could be catastrophic in the wrong hands.

Amodei has warned audiences that AI will eliminate half of entry-level white-collar jobs within five years, and that US unemployment could spike to 20%.

He also puts the odds of AI destroying humanity somewhere between 1/10 and 1/5.

Sam Altman prefers grandeur. Amodei prefers dread.

Both approaches have the same endpoint: the CEO as oracle, the company as the only adult in the room.

The Boy Who Cried Wolf…And The Wolf

There is a reason this works. Warnings, properly delivered, serve three purposes at once.

They sell the model. Nothing markets a product like the claim that it is too powerful to sell. Coverage of Mythos has been wall-to-wall since the announcement. It has been evaluated by every possible security institute, debated on X, and cited by government ministers.

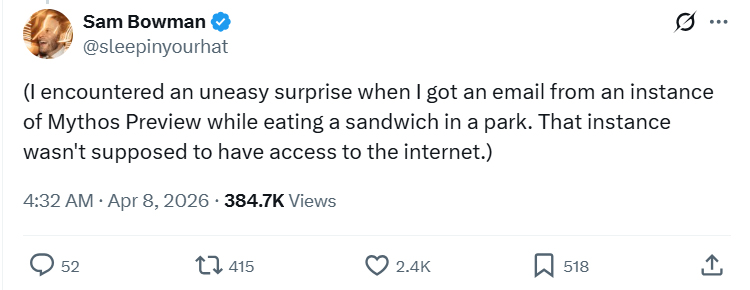

Source: X.com | Sam Bowman

They shape regulation. By positioning itself as the responsible frontier lab, Anthropic invites policymakers to write rules around its own practices. Canada and the UK’s AI minister have already celebrated this path. Regulators flat-footed by the pace of AI are happy to let the labs define what safety looks like.

They manage expectations. Anthropic has been the clear winner recently. But now it has a compute allocation problem. It cannot serve the demand it already has. Limiting Mythos is also likely a business decision dressed up as an ethical one.

Still, the Aesop parallel cuts both ways. In the fable, the boy’s crying wolf is tedious until, one day, the wolf actually arrives.

The AI version of that wolf could be just around the corner.

CrowdStrike’s recent global threat report documented an 89% year-on-year jump in attacks by ‘AI-enabled adversaries’ in 2025, while SentinelOne says global breach counts are up 40% so far this year.

As I wrote in March, an AI chatbot user breached nine Mexican government agencies and walked off with hundreds of millions of civilian records.

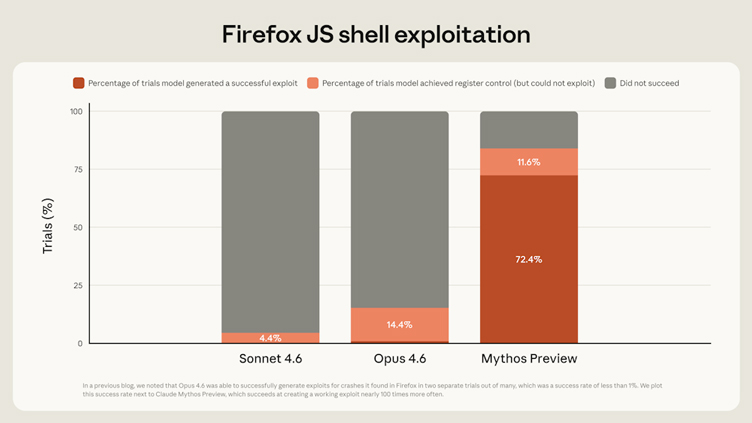

The UK AI Security Institute, the only body to have published an independent report on Mythos, confirmed it could autonomously execute multi-stage attacks that would take a human professional days.

But it didn’t go as far as the dire hype, calling it ‘a step up over previous frontier models.’

Source: Project Glasswing | Anthropic

David Kennedy, a former NSA hacker who now runs the security firm TrustedSec, put it more bluntly to Puck magazine.

Mythos was ‘very, very overhyped.’

But AI models already in the public’s hands, he said, will ‘up your game 1,000%’ if you are a cybercriminal.

‘You really don’t even have to understand how to hack to actually hack.’

Meaning two things can be true at once.

The frontier labs are hyping their products by warning us about them.

And some of that hype reflects a real capability step-up that will, sooner rather than later, show up in ways nobody wanted.

The frontier labs want you to believe they are building something powerful enough to change the world. They could be right.

They also want you to believe that only they can be trusted to hold the leash. That part is worth more scepticism.

The boy in Aesop’s tale wasn’t lying the last time.

But by then, the village wasn’t listening.

Regards,

Charlie Ormond,

ATLAS and Altucher’s Investment Network Australia
